GREE's Security Guidelines for Generative AI: Building a Foundation for Secure Use

At GREE Tech Conference 2025, Midori Okuno, a manager at GREE Holdings, Inc., gave a presentation titled "Formulating 'Information Security Guidelines' and Key Points for Safe Utilization of Generative AI," on how the company developed its rules for using generative AI. The talk was very insightful and contained many points that AICU, whose vision is "creating creators," should adopt, so I would like to introduce it here.

The video starts around 1:40:00

Background to the Formulation of Guidelines

The guidelines grew out of the "rapid spread of generative AI." In 2023, ChatGPT surpassed 100 million users within a few months, and the year became a social phenomenon dubbed the "AI Year." Within GREE, demand grew to be able to "use it with peace of mind." As a result, "it became clear that clear rules were necessary for the business use of generative AI," which led to the formulation of the guidelines.

Explanation of the "Generative AI Usage Guidelines"

Purpose of the Guidelines

The guidelines clearly state the following three purposes:

- Promoting business efficiency improvement and idea creation through the use of generative AI
- Avoiding information leakage risks
- Clarifying rules and procedures for safe use

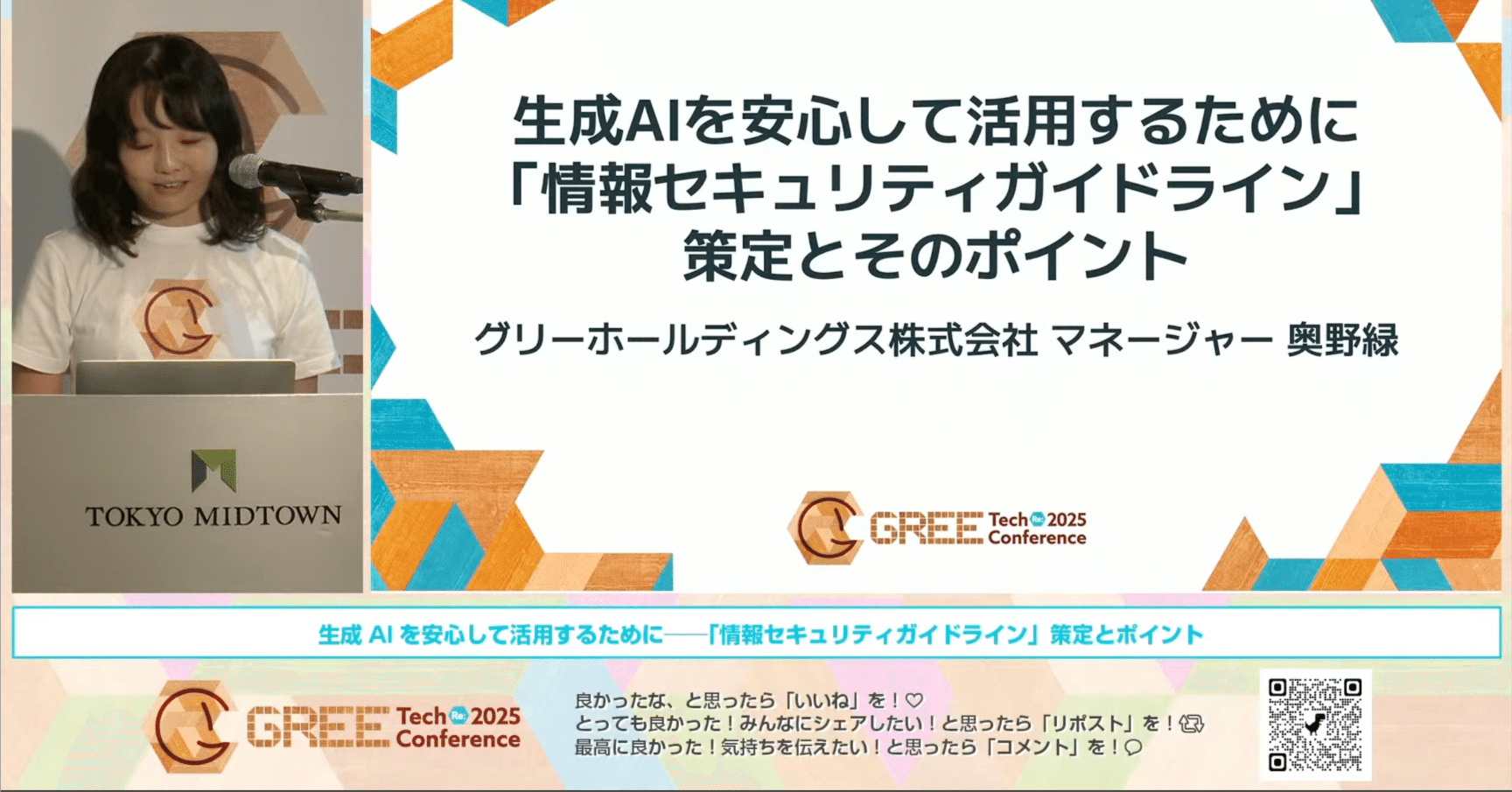

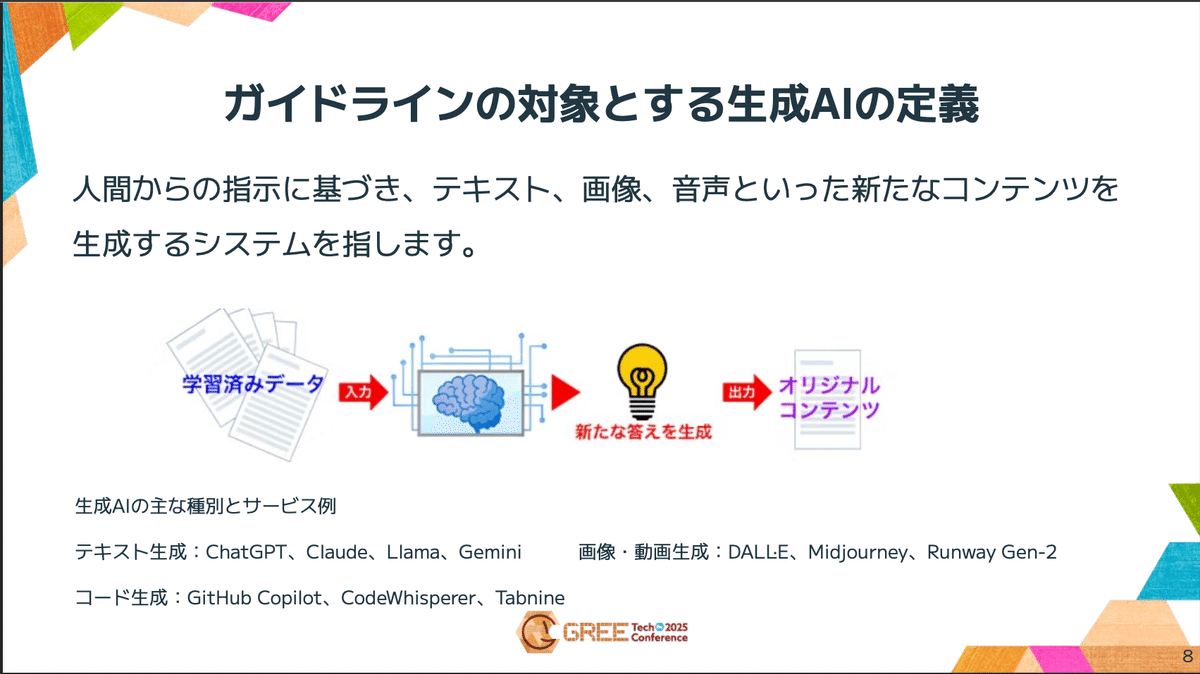

Definition of Generative AI to be Covered

The guidelines cover "systems that generate new content such as text, images, and audio based on instructions from humans." This includes text generation AIs such as ChatGPT and Claude, as well as image/video generation and code generation AIs.

"Without a Definition..." says Okuno

After the purpose, the guidelines also spell out the "definition of generative AI" they cover. Okuno's reasoning is that "if the definition is ambiguous, it will cause confusion and omissions as to whether this is covered or not."

Therefore, she stated that "it is important to clarify the scope of application of the guidelines." Incidentally, GREE's guidelines define generative AI as "referring to all systems that create new content based on instructions from humans."

Determining Usability: "Information Classification" is Key

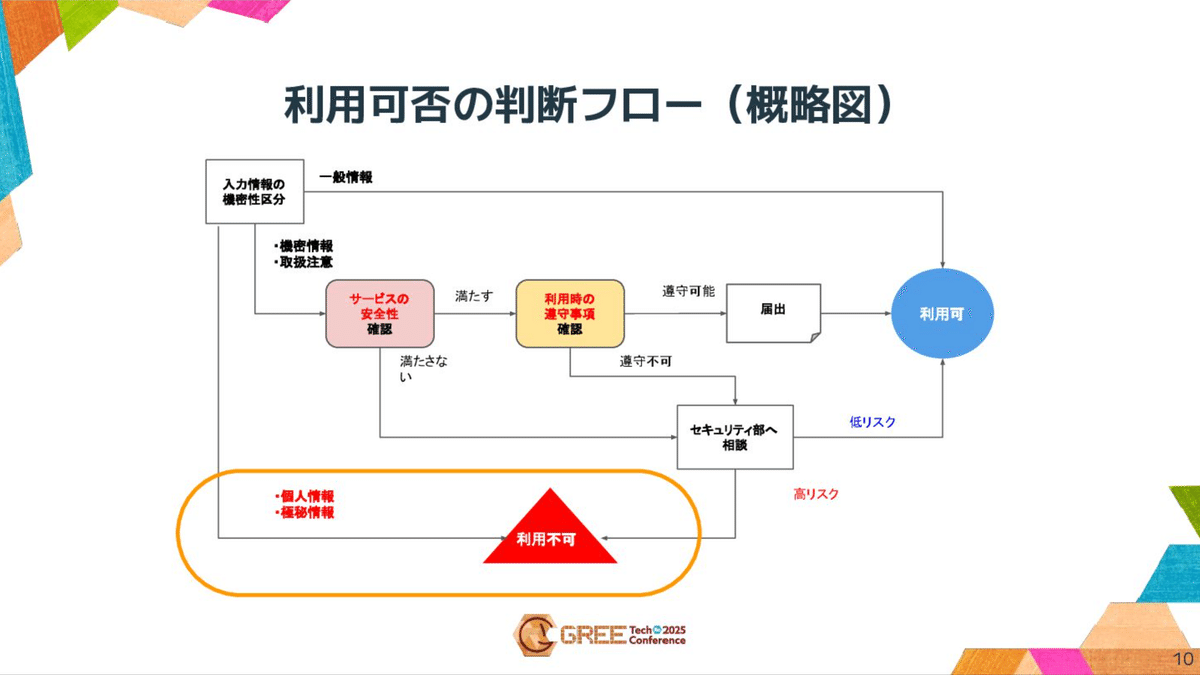

GREE "determines whether input to generative AI is allowed based on information classification."

The slide shows a table that defines whether input to generative AI is permitted, based on GREE's information classification (confidential information, secret information, attention required, general information) and on whether the data is "with personal information" or "without personal information."

Under this rule, "confidential information" (irrecoverable damage) is not allowed (NG) regardless of whether personal information is present. "Secret information" (damage that cannot be easily recovered) and "attention required" (little risk of damage) are OK only if "there is no personal information" and both the "service safety" conditions and the "compliance matters at the time of use" are observed.

The judgment is made not by the security department but by the service owner, based on the degree of confidentiality and importance of the information.

Judgment flow of usability (schematic diagram): the flow follows the confidentiality classification of the input information. Personal information and confidential information branch immediately to "unavailable."
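The judgment flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not GREE's actual implementation: the names `Classification`, `Decision`, and `can_input` are hypothetical, and the treatment of "general information" without personal information as allowed is an assumption, since the article does not spell out that cell of the table.

```python
from enum import Enum


class Classification(Enum):
    CONFIDENTIAL = "confidential information"    # irrecoverable damage
    SECRET = "secret information"                # damage that cannot be easily recovered
    ATTENTION_REQUIRED = "attention required"    # little risk of damage
    GENERAL = "general information"


class Decision(Enum):
    NOT_ALLOWED = "NG"
    CONDITIONAL = "OK if service safety and compliance matters are observed"
    ALLOWED = "OK"


def can_input(classification: Classification, has_personal_info: bool) -> Decision:
    """Return whether data of this classification may be input to generative AI."""
    # Personal information and confidential information branch straight to "unavailable".
    if has_personal_info or classification is Classification.CONFIDENTIAL:
        return Decision.NOT_ALLOWED
    # Secret and attention-required information are conditionally OK.
    if classification in (Classification.SECRET, Classification.ATTENTION_REQUIRED):
        return Decision.CONDITIONAL
    # Assumption: general information without personal information is allowed.
    return Decision.ALLOWED


if __name__ == "__main__":
    for c in Classification:
        for personal in (True, False):
            print(f"{c.value:26} personal={personal!s:5} -> {can_input(c, personal).name}")
```

Putting the personal-information check first mirrors the schematic flow, where the most sensitive inputs are rejected before any conditional judgment is made.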

Why Shouldn't Personal Information and Confidential Information be Entered Easily?

Okuno explained the reasons from two angles: the law and risk.

1. Legal Provisions

In terms of compliance, legal provisions should be "given priority over everything else," but in practice there are "no legal provisions" covering "confidential information." "Personal information," on the other hand, is regulated by the Personal Information Protection Law. The key points are Article 17 ("Specify the purpose of use") and Article 18 ("Use outside the purpose is NG"), which are the same rules that apply to any ordinary use of personal information. Care is needed, however, with Article 27, "Prohibition of provision to third parties without the consent of the individual."

The Personal Information Protection Commission has determined that input to AI does not constitute "provision to a third party" if it is not used for machine learning, etc. However, GREE judges that "the behavior and actual handling of AI are opaque," and takes the stance that "acquiring consent as an internal rule is safe."

In conclusion, the law "does not prohibit it," but it is necessary to prepare for the possibility that input falls under "provision to a third party."

2. Risks Other Than Legal

Why, then, should personal information and confidential information not be entered casually, even when the law treats the input as not constituting "provision to a third party"? Okuno gave the following four risks, beyond the legal aspect, as reasons for the NG rule.

Reason ①: "External Transmission = Uncontrollable"

The first point is that input information is transmitted externally, and "complete tracking and deletion is difficult." The moment information is input into a generative AI service, it is sent outside the company, and from that point the company can no longer control it. In addition, the input may be temporarily stored on the service provider's side, and even with a setting that disables learning, it is not realistically possible to fully track where the sent information is stored or to delete it later.

Reason ②: "Operation/Bug Risks"

The second reason is the risk of information exposure due to defects on the service side. Bugs in a generative AI service can cause unintended leakage. In the past, there have been services where chat histories were made public.

Reason ③: "Immaturity of Detection Measures"

The third point is that tools for detecting inappropriate use are immature, and "few AI services have audit logs." Security tools dedicated to generative AI have not yet become widespread, and many generative AI services themselves do not provide audit logs. It is therefore difficult to record and track usage, and it is unclear whether an incident could be handled appropriately.

Reason ④: "Difficulty in Reliability Evaluation"

The fourth point is the difficulty of evaluating reliability, because "the public system for examining and certifying" services against security standards specific to generative AI "is still under development." International standards for AI do exist: ISO/IEC 42001 was established in 2023. It is a comprehensive framework for operating AI safely and reliably, including ethics and transparency, and although it is not a security-only standard, it also covers security. However, even though the standard itself exists, the system for conducting examinations and certifications is still under development, and there is not yet a sufficient mechanism for objectively evaluating the reliability of AI services.

Conditions for "Conditional OK"

As described above, information input into generative AI may become uncontrollable, may be impossible to delete, and may leak. Furthermore, the monitoring and certification mechanisms that would reduce these risks are not yet in place. That is why we have a cautious rule that personal information and confidential information, the most important categories, should not be input into generative AI services at this time.

For the "service safety" condition, the GREE Group has set up several checkpoints as the basis for judgment.

The first is whether the provider holds certifications such as ISMS and P-mark. These are not certifications dedicated to generative AI, but they serve as a guide to whether the service provider has cleared certain standards for information security and personal information protection. The second is incident history: whether there have been any major incidents in the past and, if so, whether appropriate measures were taken to prevent similar incidents from recurring.

Regarding confidentiality agreements, we confirm whether protection of the input data is clearly stated in the contract or policy. Specifically, we check the service provider's terms of use and privacy policy for clauses on confidentiality, data protection, and the like.

The next item, "Is the input data used for learning?", is unique to generative AI and is arguably the most important. We confirm whether the service does not learn from input, or whether users can configure it not to. Finally, we confirm whether a contact point is in place so that we can consult immediately if a problem occurs. By checking these aspects comprehensively, we judge whether it is safe to input secret information or attention-required information into the service.

The other condition is compliance matters at the time of use. The service safety checks described earlier concern whether the service itself is safe; these, by contrast, are rules that users themselves must follow. Again, these are only examples, but the basic items are: do not input personal information or confidential information, always use a company account rather than a private one, and always review generated code before incorporating it into business use rather than using it as is.

Furthermore, when generative AI is incorporated into GREE Group services, we require each user department to appropriately manage API keys and accounts for management screens.
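The service safety checkpoints above can be recorded as a simple checklist. The following is a minimal sketch, assuming each checkpoint is captured as a yes/no answer; the class `ServiceSafetyCheck`, its field names, and `unmet_items` are hypothetical names introduced for illustration. Because the talk describes a comprehensive judgment rather than a strict pass/fail rule, the sketch only lists the unmet items for a human reviewer and does not decide on its own.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ServiceSafetyCheck:
    """Yes/no answers for one generative AI service (hypothetical structure)."""
    has_security_certification: bool    # e.g. ISMS or P-mark
    incident_history_acceptable: bool   # no major incident, or recurrence prevention was taken
    protects_input_by_contract: bool    # confidentiality / data protection clauses are stated
    no_training_on_input: bool          # does not learn, or the user can opt out
    has_contact_point: bool             # somewhere to consult immediately if a problem occurs


def unmet_items(check: ServiceSafetyCheck) -> list[str]:
    """Return the names of checkpoints answered 'no', in declaration order."""
    return [f.name for f in fields(check) if not getattr(check, f.name)]


if __name__ == "__main__":
    candidate = ServiceSafetyCheck(
        has_security_certification=True,
        incident_history_acceptable=True,
        protects_input_by_contract=True,
        no_training_on_input=False,
        has_contact_point=True,
    )
    print(unmet_items(candidate))
```

Returning the list of unmet items, rather than a single boolean, keeps the final decision with the reviewer, which matches the "comprehensive" judgment described in the talk.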

Risks of Agent Functions

Recently, more generative AI services offer agent functions. Because these carry the risk that the AI proceeds with operations automatically, we have also established rules such as configuring the agent to ask for confirmation each time instead of executing automatically, and not allowing dangerous operations such as deletion, modification, and use of API keys. In this way, in addition to the safety of the service, we also have rules that users must follow.


How the Guidelines Were Created

Next, Okuno explained how the guidelines were created. First, the security department drafted the guidelines. Specifically, it identified the risks associated with using generative AI and formulated measures and rules to reduce them. These include the conditions already described, such as what information may be input and what kinds of services may be used.

Compliance Matters at the Time of Use

Rules that users must follow are also stipulated.

In particular, when a service has an "agent function," more cautious rules apply, such as "set to 'confirm each time' and do not use the 'always approve' setting" and "operations with high risks such as deletion and configuration changes should be performed manually in principle."
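The agent rules can be pictured as a small gate placed in front of every operation an agent proposes. This is a sketch under assumptions, not a real product API: `OperationKind`, `Handling`, `HIGH_RISK`, and `gate_agent_operation` are hypothetical names, and treating use of API keys as high risk follows the talk's list of dangerous operations.

```python
from enum import Enum


class OperationKind(Enum):
    READ = "read"
    DRAFT = "draft"
    DELETE = "delete"
    CONFIG_CHANGE = "config_change"
    USE_API_KEY = "use_api_key"


class Handling(Enum):
    CONFIRM_EACH_TIME = "ask the user before executing"
    MANUAL_ONLY = "do not let the agent execute; perform manually"


# High-risk operations named in the rules (deletion, configuration changes, API key use).
HIGH_RISK = frozenset(
    {OperationKind.DELETE, OperationKind.CONFIG_CHANGE, OperationKind.USE_API_KEY}
)


def gate_agent_operation(kind: OperationKind, always_approve: bool = False) -> Handling:
    """Decide how a proposed agent operation must be handled."""
    # The 'always approve' setting is not permitted under the rules.
    if always_approve:
        raise ValueError("'always approve' is not permitted; use 'confirm each time'")
    # High-risk operations are performed manually in principle.
    if kind in HIGH_RISK:
        return Handling.MANUAL_ONLY
    # Everything else still requires confirmation on every execution.
    return Handling.CONFIRM_EACH_TIME


if __name__ == "__main__":
    for kind in OperationKind:
        print(f"{kind.value:14} -> {gate_agent_operation(kind).name}")
```

Note that there is deliberately no "execute automatically" outcome: under these rules even a low-risk operation is confirmed each time.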

Formulation Flow and Related Departments

The guidelines were formulated by the security department in cooperation with related departments: the "legal department," "personal information management department," "risk management department," and "information system/infrastructure department."

The decision process was as follows: the security department created a policy proposal, coordinated with related departments, had the proposal "reviewed and approved at the information security committee," and finally obtained "a decision at the management meeting."

In other words, "as a matter that greatly affects business, it was finally approved by the management meeting and decided as a company-wide usage policy." This suggests that AI was not introduced to follow a passing fad; it was an important management decision, not a solo effort by individual engineers or managers.

Issues and Efforts

Issues in Guideline Operation

There are six main issues in operating the guidelines: "response to technological evolution," "balancing convenience and safety," "complexity of rules and lack of user understanding," "burden of consultation response," "immaturity of detection measures," and "lack of public certification."

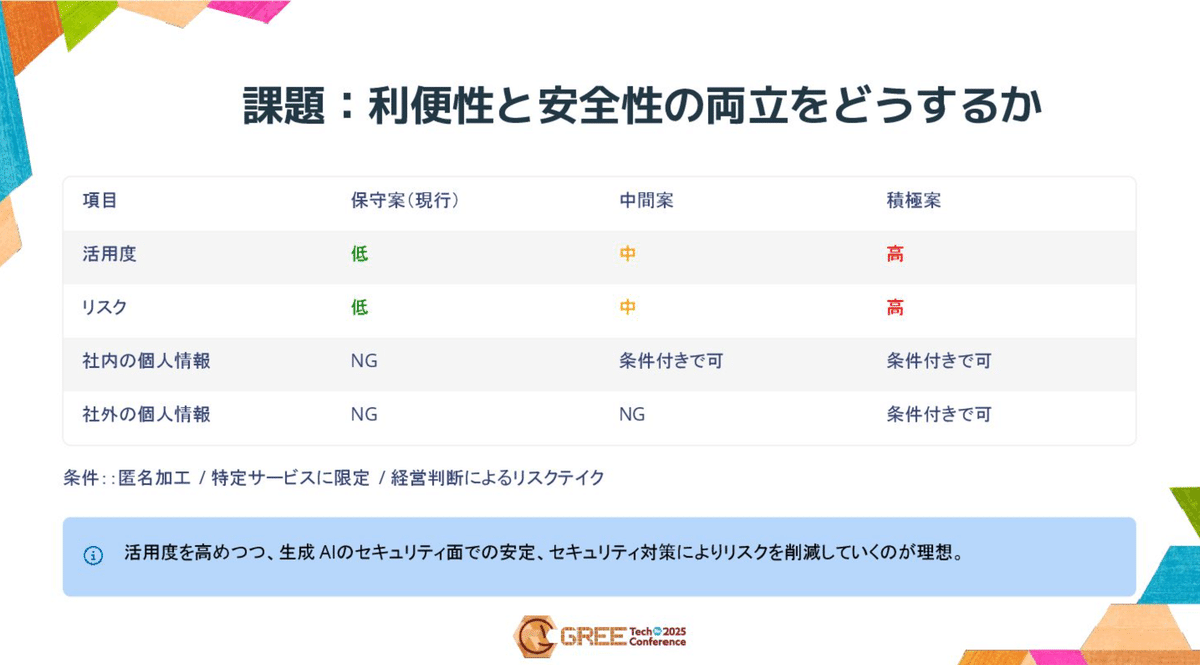

The biggest issue is "balancing convenience and safety." In particular, how to balance the degree of use against risk in handling personal information requires continuous consideration.

The slide for this issue is a table comparing three proposals (conservative, intermediate, and aggressive) for handling personal information. The current "conservative proposal" prohibits personal information both inside and outside the company, but the ideal for the future is to "increase the degree of use while reducing risks through security measures."

The "complexity of rules" is also an issue: the actual judgment flow is complex and involves coordination with internal systems.

Four Efforts in Operation

In response to these issues, GREE is pursuing four initiatives.

User Efficiency

"Officially introduced generative AI is registered in the 'whitelist', and individual safety confirmation is omitted." Furthermore, "'list under confirmation' and 'NG list' are published to speed up self-judgment of usability."

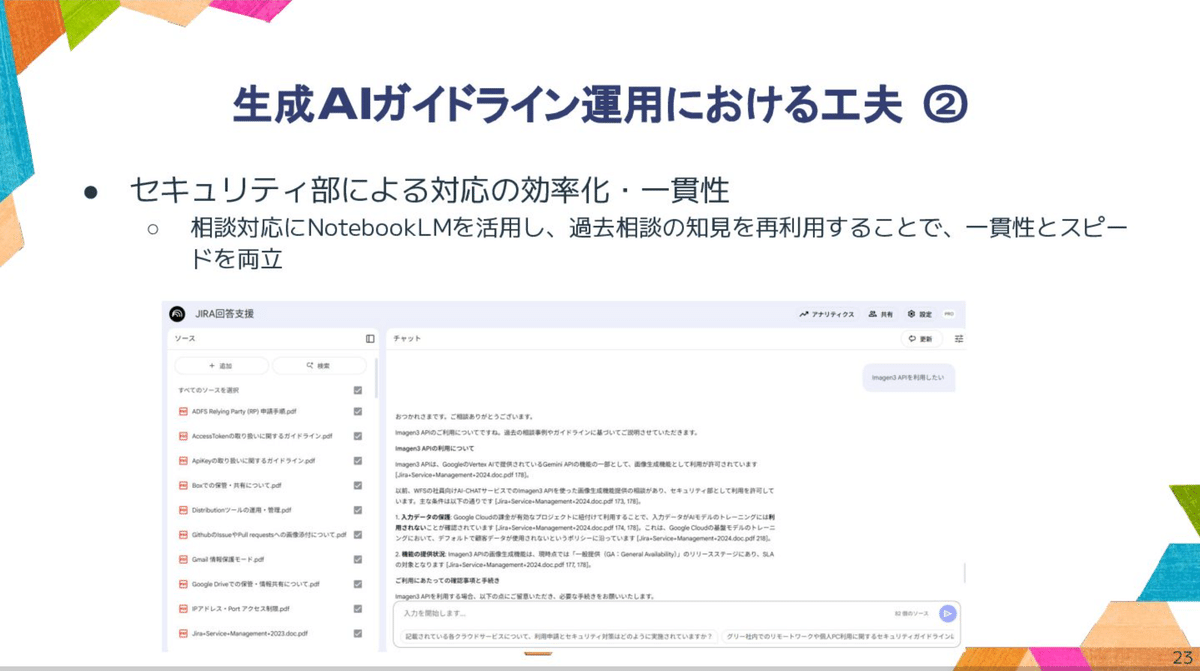
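The three published lists let a user check a service's status before asking the security department. A minimal sketch of such a lookup follows; the service names are placeholders, and the handling of services that appear on no list (requesting a safety confirmation) is an assumption, since the article does not describe that case.

```python
# Placeholder entries; the real lists are maintained internally and are not given in the article.
WHITELIST = {"example-approved-ai"}
UNDER_CONFIRMATION = {"example-pending-ai"}
NG_LIST = {"example-rejected-ai"}


def lookup_status(service_name: str) -> str:
    """Return the self-service judgment for a generative AI service name."""
    name = service_name.strip().lower()
    if name in NG_LIST:
        return "NG: do not use"
    if name in WHITELIST:
        return "OK: officially introduced, individual safety confirmation omitted"
    if name in UNDER_CONFIRMATION:
        return "pending: safety confirmation in progress"
    # Assumption: unlisted services go through an individual safety confirmation.
    return "unlisted: request a safety confirmation"


if __name__ == "__main__":
    for service in ("Example-Approved-AI", "example-pending-ai", "some-new-ai"):
        print(f"{service}: {lookup_status(service)}")
```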

Efficiency and Consistency of Response

"NotebookLM is used for consultation response, and knowledge from past consultations is reused to achieve both consistency and speed." The slide shows an example of "JIRA response support".

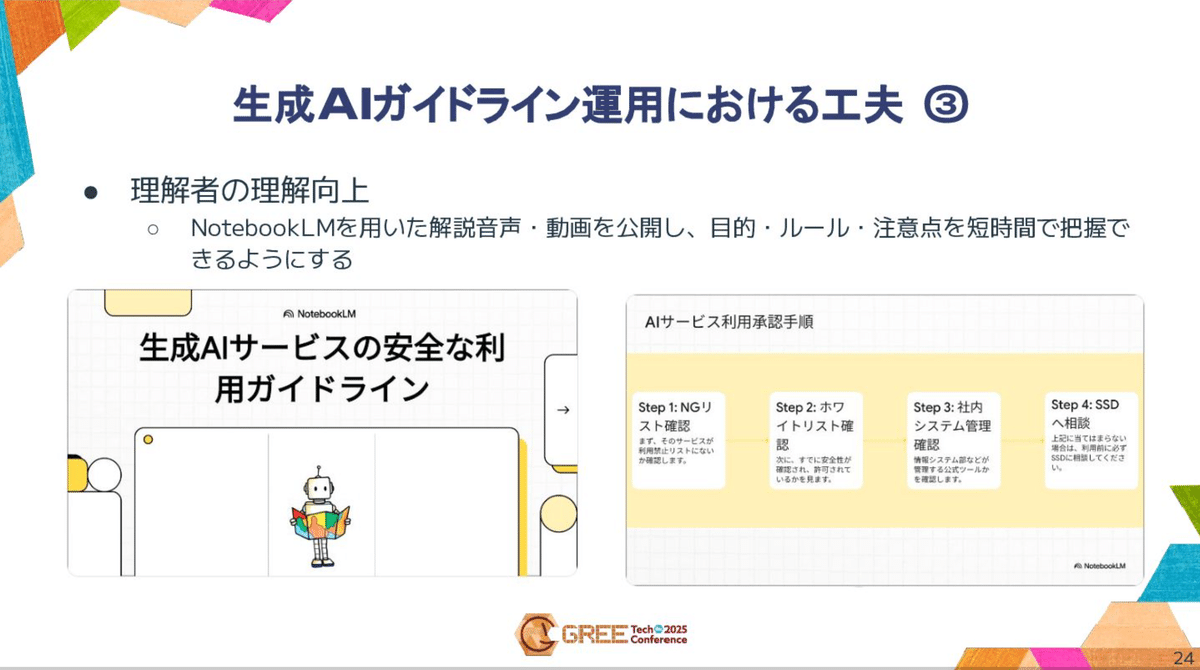

Improving User Understanding

An effort is also being made to "publish explanatory audio and videos using NotebookLM so that the purpose, rules, and points to note can be grasped in a short amount of time."

Collecting Other Company Examples

"Information exchange on guidelines/usage status is continued and updated as needed", and "usage trends and best practices outside the company are regularly shared with management to support decision-making."

Summary: To Use Generative AI Safely

Okuno listed the following five points as important for safe use:

- Balance: "Correctly understand convenience and risks and maintain an appropriate balance"
- Update: "Continuously collect technology trends, other company examples, and internal usage status and quickly reflect them in the rules"
- Enlightenment and Burden Reduction: "Continuously disseminate information through education, internal blogs, etc. to raise understanding and compliance awareness"
- Consistent and Rapid Response: "Use NotebookLM etc. to achieve both speed and consistency of judgment"
- Understanding of Management: "Regularly report to the Information Security Committee, Management Meeting, etc. to gain understanding of investment in technical measures"

This concludes the lecture.

GREE Holdings, Inc. - Sustainability - Information Security Introducing the GREE Group's "Sustainability". hd.gree.net

Impressions of AICU AIDX Lab

"Rules are not to hinder usage, but the foundation for using it with peace of mind."

Although it was a very short lecture, I was impressed by how logically it laid out enterprise guidelines for generative AI as the "current location" as of 2025. Much of the marketing around AI information products treats "using AI" as the goal in itself, and AI use is indeed becoming widespread; yet establishing workable guidelines for the everyday operations of IT service companies, such as engineering, marketing, and customer management, remains unresolved. It is therefore meaningful that guidelines others can follow, together with the process of their formulation, have been made public. The talk also touched on the problems of the agent era, represented by ChatGPT Atlas, in which AI can perform operations on behalf of users.

AICU is active under the vision of "creating creators." This time, I was pleased to discover, at GREE, a long-established IT mega-venture, "creators" of "guidelines for companies to use with peace of mind," and to find them in departments such as internal audit and security.

If you have any examples of "more interesting initiatives" in your company, please let us know.

Finally, I would like to express my gratitude to everyone involved in writing this article.

Ms. Midori Okuno, Planning Team, Security Department, Business Technology Division, GREE Holdings, Inc.

Mr. Yutaka Sajima, GREE Co., Ltd.

Thank you for your cooperation in the interview!
