Mastering ComfyUI Video Generation 'Wan2.2' Prompt Collection (3): Basics of Camera Movement

"Wan2.2" is a video generation model that can be used for free with ComfyUI. AICU has obtained, via China, an official document that reads like a "secret book of prompts." Because it is so extensive, we are covering it in detail over several installments.

Following the first installment on "Style" and the second on "Motion & Emotion," this third installment explains "Camera Movement," which dramatically improves the quality and storytelling of generated video.

Prompt Collection to Master Wan2.2 (1) Style: a collection of prompts for the video generation model "Wan2.2," which can be used for free with ComfyUI, with details and usage examples for each style. www.aicu.jp

Prompt Collection to Master Wan2.2 (2) Motion & Emotion: a collection of prompts for the video generation model "Wan2.2," this time focusing on motion and emotion. www.aicu.jp

No matter how beautiful the style or the character, a video loses half its appeal if the camera work is monotonous. Master the camera instructions that Wan2.2 understands and aim for cinematic direction.

Learn from the Latest ComfyUI Basic Template

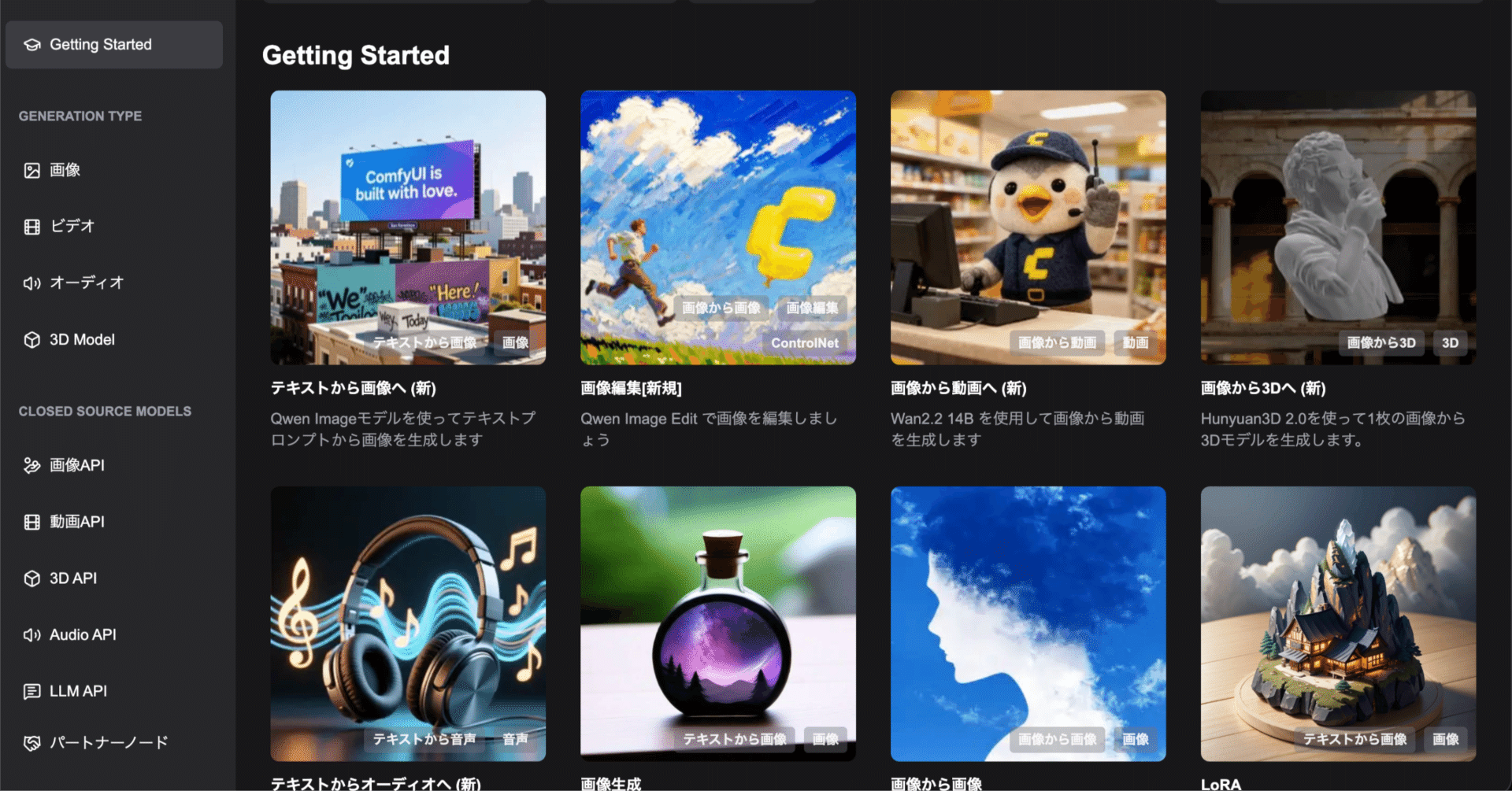

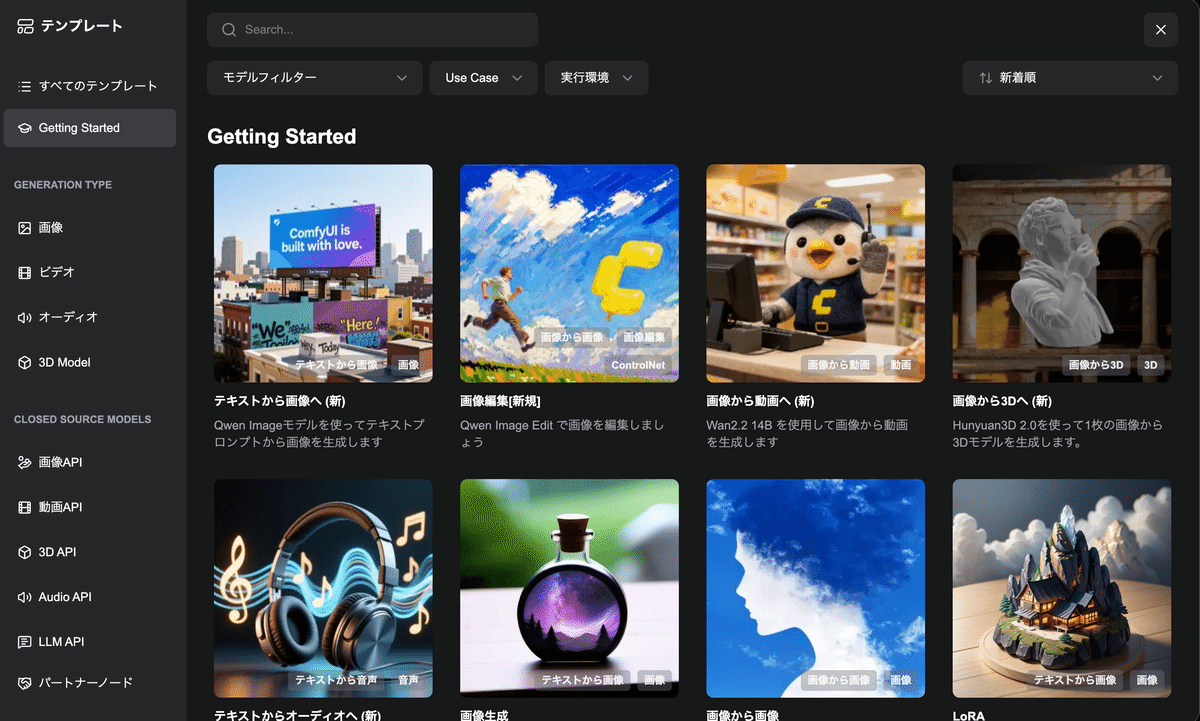

This walkthrough uses the latest ComfyUI (0.3.76) and the "Image to Video (New)" template found under "Getting Started."

Compared with what is covered in the ComfyUI "Purple Book," the video generation features have changed substantially.

Image/Video Generation AI ComfyUI Master Guide (Generative AI Illustration), j.aicu.ai, 3,850 yen (as of November 18, 2025, 23:41), available on Amazon.co.jp

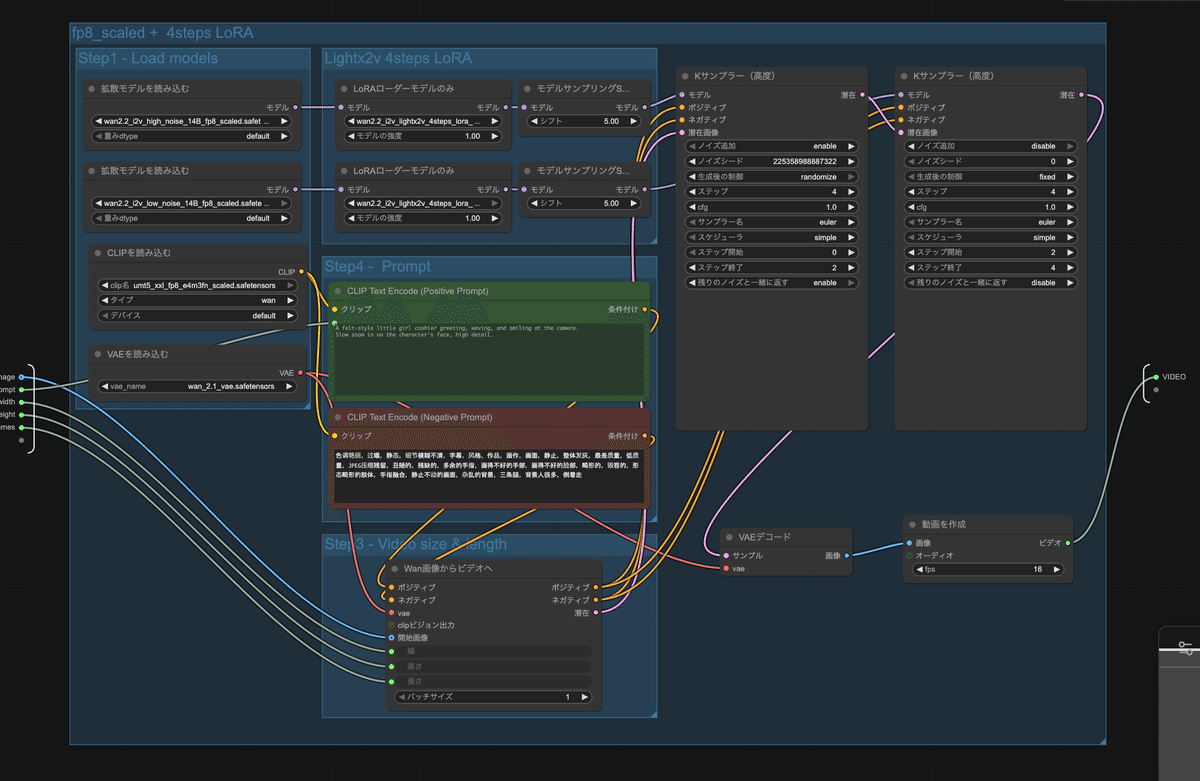

Wan2.2 Video Generation ComfyUI Official Native Workflow

Wan2.2 is a new-generation multimodal generative model announced by WAN AI. It employs an innovative MoE (Mixture of Experts) architecture made up of a high-noise expert model and a low-noise expert model. Because the experts are divided according to the denoising timestep, the model can generate higher-quality video.
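The split between the high-noise and low-noise experts described above can be pictured as a simple step plan: the early (noisy) sampler steps go to one expert and the remaining steps go to the other. The following is only a minimal sketch of that idea, not the model's or ComfyUI's actual code. The function name `split_steps_between_experts` and the `boundary` fraction are hypothetical, and the 50/50 split used in the example is an assumption chosen for illustration.

```python
from typing import List, Tuple


def split_steps_between_experts(
    total_steps: int, boundary: float = 0.5
) -> List[Tuple[str, int, int]]:
    """Plan which expert handles which range of denoising steps.

    `boundary` is a HYPOTHETICAL fraction of the steps handed to the
    high-noise expert; the real switch point depends on the workflow.
    Each entry is (expert_name, start_step, end_step), end exclusive.
    """
    if total_steps < 1:
        raise ValueError("total_steps must be at least 1")
    if not 0.0 <= boundary <= 1.0:
        raise ValueError("boundary must be between 0.0 and 1.0")

    switch_step = round(total_steps * boundary)
    plan: List[Tuple[str, int, int]] = []
    if switch_step > 0:
        # Early steps: the latent is still very noisy.
        plan.append(("high_noise", 0, switch_step))
    if switch_step < total_steps:
        # Late steps: mostly detail refinement.
        plan.append(("low_noise", switch_step, total_steps))
    return plan


if __name__ == "__main__":
    for expert, start, end in split_steps_between_experts(20, 0.5):
        print(f"{expert}: steps {start} to {end}")
```

With 20 steps and an assumed boundary of 0.5, the plan assigns steps 0 to 10 to the high-noise expert and steps 10 to 20 to the low-noise expert.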

Wan2.2 has three core features. The first is cinematic-level aesthetic control: it deeply integrates the aesthetic standards of the professional film industry and supports multi-dimensional visual control over lighting, color, composition, and more. The second is large-scale complex motion: it reproduces a wide variety of complex motions with ease and improves their smoothness and controllability. The third is precise semantic compliance: it excels at generating complex scenes with multiple objects and reproduces the user's creative intent more faithfully. The model supports multiple generation modes, such as text-to-video and image-to-video, and suits a range of application scenarios including content creation, artistic creation, education, and training.

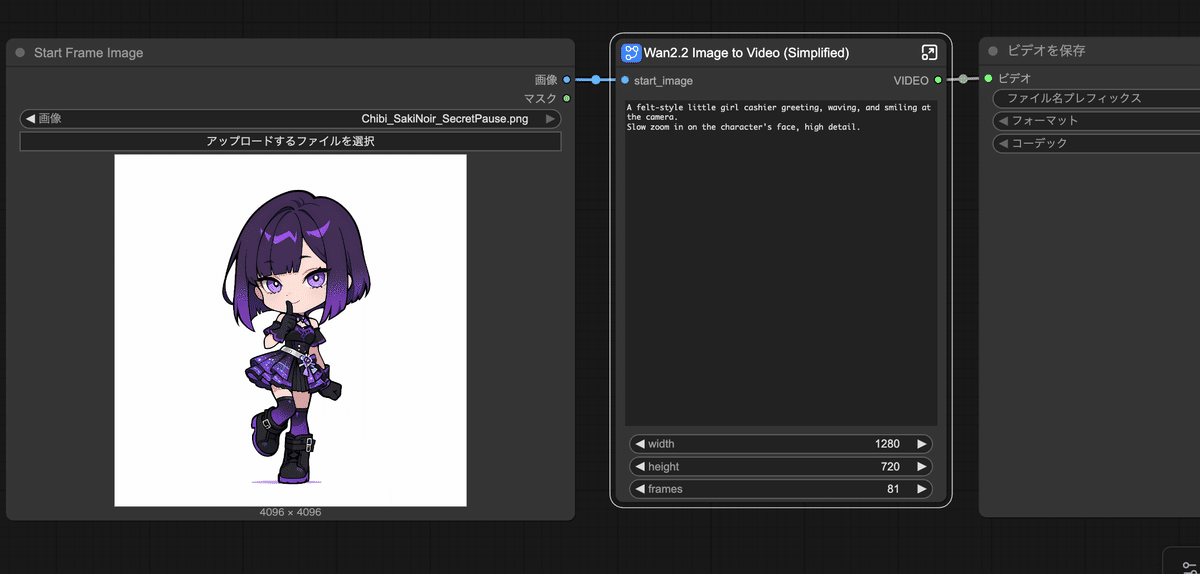

In this tutorial, you will learn about the Wan2.2 environment and camera work using Google Colab. You can also learn about the Wan2.2 Image to Video (Subgraph) node, which is a "subgraph" converted from the Wan2.2 Image to Video workflow.

Using subgraphs, such a huge workflow can be expressed at a much smaller size.

Note that Wan2.2-related workflows require a large amount of VRAM (20GB or more). If you run this workflow locally, make sure you have enough VRAM. AICU offers several solutions.
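If you want to check a local machine against the 20GB guideline above before loading the workflow, something like the following sketch may help. It assumes PyTorch is installed and a CUDA GPU is present, and it falls back gracefully if either is missing. The helper names `has_enough_vram` and `detect_total_vram_bytes` are hypothetical, and treating "20GB" as 20 GiB is an assumption made here for simplicity.

```python
from typing import Optional

GIB = 1024 ** 3  # bytes per GiB


def has_enough_vram(total_bytes: int, required_gib: float = 20.0) -> bool:
    """Return True if the reported VRAM meets the (assumed) requirement."""
    return total_bytes >= required_gib * GIB


def detect_total_vram_bytes() -> Optional[int]:
    """Return the total memory of CUDA device 0, or None if unavailable."""
    try:
        import torch  # optional dependency; only needed for detection
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None
    return torch.cuda.get_device_properties(0).total_memory


if __name__ == "__main__":
    total = detect_total_vram_bytes()
    if total is None:
        print("No CUDA GPU detected (or PyTorch is not installed).")
    elif has_enough_vram(total):
        print(f"OK: {total / GIB:.1f} GiB of VRAM available.")
    else:
        print(f"Only {total / GIB:.1f} GiB of VRAM; consider a cloud environment.")
```

This only reports the total memory of the first GPU; it does not account for memory already in use by other processes.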

[Notice] AICU Lab+ continuously shares the latest information and verification results on generative AI like this. In addition, the official study group for the book "Image/Video Generation AI ComfyUI Master Guide" and the ComfyJapan study group are available for those who want to learn from the basics.