Alibaba Cloud Announces "Wan 2.6": Evolved Audio Generation, Cinematic Visuals, and a $1,000 Video Contest!

On December 17, 2025, Alibaba Cloud will announce its next-generation visual foundation model, "Wan 2.6." The model features high-definition video generation, synchronized audio, and advanced prompt following, dramatically expanding what creators can express. Alibaba Cloud is also running a video contest with a $1,000 grand prize.

AiCuty's video explorer, Saki here.

It's the season when cold winds blow through the city, but a new heat wave seems to be rolling into the world of rendering. Today I have news that no video creator can afford to miss.

Alibaba Cloud's next-generation visual foundation model, "Wan 2.6," is an evolution that breathes "sound" and "story" into video, as if breaking the silence. Shall we dive a little deeper together?

December 17: All Eyes on "Wan"

First, mark your calendar: the "WAN2.6 LAUNCH LIVESTREAM" will be held on December 17, 2025. This is not just an update. It is Alibaba Cloud's research team confidently taking on the "cinema grade" challenge, aiming for quality that holds up in film production and commercial creative work.

Fortunately, there is also a Japanese-language live session. As a Japanese creator, you can't afford to miss this wave, right?

[Register on Calendar] WAN2.6 Launch: Japanese Live Broadcast, 2025/12/17 from 16:00. https://www.alibabacloud.com/en/events/wan-model-launch?_p_lc=1

From "video only" to "video with sound"

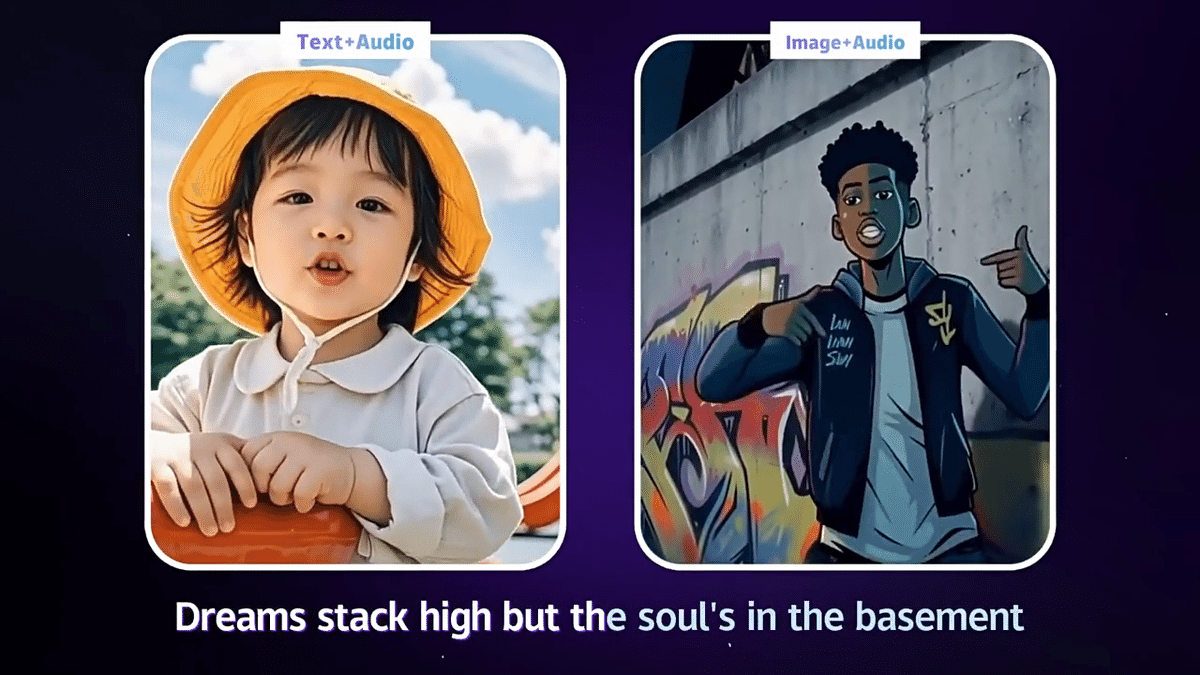

Judging from the published Wan2.5-preview information, the biggest feature of Wan 2.6 is likely to be integrated "Audio Generation".

https://video-intl.alicdn.com/2022/%E8%A7%86%E9%A2%91-wan.mp4

Until now, the standard way to make AI video was to generate the picture and the sound separately and then combine them in editing software. Wan 2.6's architecture, however, appears to support audio generation synchronized with the visuals. The current Wan2.5-preview specs are as follows:

- Text-to-Video / Image-to-Video: Longer clips of 10 seconds can now be generated.
- Improved instruction following: Prompt understanding, already excellent in the previous "Wan 2.2," appears to be further enhanced.
- Pursuit of realism: The rendering of physics and light is approaching reality, or an idealized cinematic reality.

Practice: Saki-style cinematic prompts

I expect the principles in the "Wan 2.2 Prompt Guide," which AICU has already covered, to remain valid for the new model. Keeping in mind the three elements of "subject," "scene," and "motion," as well as this contest's theme of "business storytelling," I put together a prompt.

For example, suppose you want to depict delicate beauty, as in a cosmetics commercial:

Prompt Suggestion: In a serene forest clearing, a sleek black shampoo bottle stands gracefully atop a mound of vibrant green moss, surrounded by a bouquet of wildflowers in a riot of colors. The camera glides from a low angle, smoothly circling the bottle to reveal delicate petals dancing in a gentle breeze. Sunlight filters through the trees, casting a warm glow... The scene culminates in a lingering shot, the bottle at the center, suggesting beauty and purity.

I hope Wan 2.6 executes the "Camera Movement" instructions from the Wan 2.2 guide, such as "The camera glides from a low angle," even more smoothly.
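To reuse the subject / scene / motion structure across many product shots, it can help to assemble prompts from parts. The sketch below is a minimal illustration, assuming that writing each element (plus an optional camera move) as its own sentence is a reasonable starting point; `build_prompt` is a hypothetical helper of mine, not part of any Wan, Model Studio, or DashScope API.

```python
def build_prompt(subject, scene, motion, camera=None):
    """Hypothetical helper: join subject, scene, motion (and an optional
    camera move) into one prompt, one sentence per element."""
    parts = [subject, scene, motion]
    if camera:
        parts.append(camera)
    sentences = []
    for part in parts:
        # Trim whitespace and any trailing period so each sentence ends with exactly one.
        text = part.strip().rstrip(".")
        if text:
            sentences.append(text + ".")
    return " ".join(sentences)


if __name__ == "__main__":
    print(build_prompt(
        "A sleek black shampoo bottle stands atop vibrant green moss",
        "Sunlight filters through the trees of a serene forest clearing.",
        "Wildflower petals dance in a gentle breeze",
        camera="The camera glides from a low angle, smoothly circling the bottle",
    ))
```

Keeping the camera move as a separate argument makes it easy to try several camera instructions against the same subject and scene when comparing models.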

For Developers: Access to Model Studio

"Will it work with ComfyUI?"

You're probably thinking that, right?

For now, it will mainly be available through the official "Model Studio," but Alibaba Cloud models can often be controlled through the DashScope Python SDK. If you're an engineer who wants to call the API, prepare a "Bailian" (large model platform) account in addition to your Alibaba Cloud account.

https://bailian.console.alibabacloud.com/

The API manual appears to be here: https://modelstudio.console.alibabacloud.com/?tab=doc#/doc/?type=model&url=2873061

I'm also looking forward to ComfyUI support. I'll share it at the AICU Lab+ study group when that happens.

"AI Video for Business": $1,000 Prize Video Contest!

When a new tool arrives, it's a creator's nature to want to try it out. Alibaba Cloud is holding "The AI Wan Challenge."

- Theme: AI Video for Business (brand story, product demo, etc.)
- Requirements: A video at least 15 seconds long, made with Wan
- Prize: $1,000 grand prize

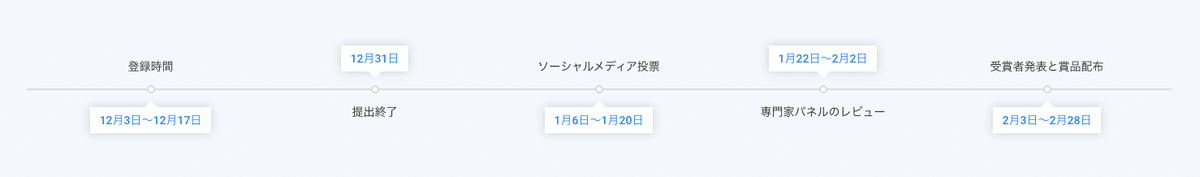

Timeline

- Registration: December 3 - December 17
- Deadline: December 31, 2025
- Voting: January 6 - January 20
- Expert panel review: January 22 - February 2


Don't overthink the "business" part. A story that moves someone's heart, or a bit of magic that brings out a product's charm: you can depict it with AI. Note that registration closes on the day of the livestream. See the contest page for details and registration.

Apparently, by answering a questionnaire you can also get access to free video course materials (in English).

AI Design Series: This course is related to Alibaba Cloud AI Designer. You need to purchase a certification package to complete all the lessons required to obtain certification.

Course introduction

Chapter 1: AI in Design
- Lesson 1 (Free): The AI Design Revolution: Exploring a New Wave of Creativity
- Lesson 2 (Free): Discover the Power of ComfyUI: Open Source AI Model + Wan

Chapter 2: Turning Text into Images
- Lesson 3 (Free): ComfyUI Basics: Text-to-Image Workflow with Effective Prompts
- Lesson 4 (Free): 480p to 4k: Upscaling Images with ComfyUI
- Lesson 5: Complete the Poster: Create a Professional Poster with ComfyUI
- Lesson 6: Style Chameleon: Conveying Custom Styles with LoRA

Chapter 3: Image to Image
- Lesson 7 (Free): ComfyUI Basics: Image-to-Image Workflow
- Lesson 8: Add Flair with LoRA: Transfer Style in Image-to-Image Workflow
- Lesson 9: Style to Precision: LoRAs + ControlNet in ComfyUI Workflow

Chapter 4: Wan in ComfyUI
- Lesson 10 (Free): WAN2.1 for Beginners: Bringing Stories to Life
- Lesson 11 (Free): WAN2.1 Introduction: Bringing Images to Life
- Lesson 12: WAN2.1 Advanced Edition: Rendering the Overgrowth Effect of Autoregression
- Lesson 13: WAN2.1 Advanced Edition: Generating 360° Views from 2D Characters

Chapter 5: Wan Video Generation
- Lesson 14 (Free): First Look at Wan: Exploring the Wan Web Interface
- Lesson 15 (Free): Step One: About Image-to-Video Conversion, First and Last Frames, Special Effects
- Lesson 16 (Free): Ad-Wan-ced: Mastering Reference, Repaint, and Inpaint

Chapter 6: Case Studies
- Lesson 17 (Free): i Light Singapore 2025: Connecting with the Digital Identity of the Future (Part 1/2)
- Lesson 18: i Light Singapore 2025: Taking a Step Closer to the Future with AI (Part 2/2)

Chapter 7: ComfyUI x Wan Advanced Features
- Lesson 19: Controllable Characters: Image Reference, Pose, and Depth Control with Wan2.1 VACE
- Lesson 20: Advanced Video Editing: Inpainting and Outpainting with Wan2.1 VACE
- Lesson 21: Style Fine-Tuning: Training Custom LoRAs with Wan2.2 and ModelScope

Chapter 8: Making a Sci-Fi Short Film with Wan
- Lesson 22: New Features in Wan 2.2: Dynamic Video Generation Evolved Like Never Before
- Lesson 23: New Features in Wan 2.2: Animating Particles in Next-Generation Visual Dimensions
- Lesson 24: Making a Sci-Fi Short Film with Wan: Building the Story Framework (Part 1/3)
- Lesson 25: Making a Sci-Fi Short Film with Wan: Generating Storyboards and Video Assets (Part 2/3)
- Lesson 26: Making a Sci-Fi Short Film with Wan: Designing Shot Transitions and Sound (Part 3/3)

Chapter 9: Introduction to AI Music Video Production
- Lesson 27: Making an AI Music Video: The Birth of an AI Idol (Part 1/3)
- Lesson 28: Making an AI Music Video: AI-Generated Lyrics and Music (Part 2/3)
- Lesson 29: Making an AI Music Video: Lip-syncing with WAN S2V (Part 3/3)

That's quite substantial content! Unfortunately, it's only in English. It's similar to AICU's study sessions and certification content!

Summary

In December the city glitters with illuminations, but an even more boundless light is spreading across our monitors. The fusion of sound and video that Wan 2.6 brings may be a passport to a future where all of us can be filmmakers and composers.

Remember the "Character Emotion" CSV in the Wan 2.2 guide. Infuse your product and brand stories with emotions such as "Angrily" and "Happy."

By the car window on a rainy day, a young man leans against the cold glass. His gaze is empty and unfocused as he stares sadly outside. A single tear, without warning, slips from his reddened eye.

A young girl pushes open a door, a surprised expression on her face as she sees a completely unexpected scene.
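Emotion cues like these can also be kept in a small table and attached to a subject and action programmatically. The sketch below is a minimal illustration only: the column names `emotion` and `cue`, the cue wording, and the helpers `load_emotions` and `apply_emotion` are hypothetical, and the actual CSV in the Wan 2.2 guide may be laid out differently.

```python
import csv
import io

# Hypothetical stand-in for the guide's "Character Emotion" CSV;
# the real file's columns and wording may differ.
EMOTION_CSV = """emotion,cue
Angrily,a clenched jaw and a sharp glare
Happy,a wide smile and bright eyes
"""


def load_emotions(text):
    """Map each emotion label to its visual cue using the csv module."""
    return {row["emotion"]: row["cue"] for row in csv.DictReader(io.StringIO(text))}


def apply_emotion(subject, action, emotion, cues):
    """Build one sentence that names the emotion and shows it visually."""
    return f"{subject} {action}, {emotion.lower()}, with {cues[emotion]}."


if __name__ == "__main__":
    cues = load_emotions(EMOTION_CSV)
    print(apply_emotion("A young girl", "pushes open a door", "Happy", cues))
```

Pairing each emotion word with a visible cue (a facial expression or gesture) follows the spirit of the examples above, where the tear and the surprised expression carry the feeling.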

I'll wrap up here; it's time to start thinking about the edit. For creators, it's not just about getting pretty pictures; it's about whether the model performs as intended. That's what I want to see in the livestream on the 17th. Next, it's your turn to create. Viral buzz is nice, but sometimes I want to sit down and watch a video that tells a story. See you in the next news update.

AiCuty video manager Saki Noir, created by people and AI. See you soon💜